As AI video generation becomes more advanced, users expect higher quality, longer clips, and fewer limitations. Yet many creators using Sora have noticed a curious restriction: videos generated at 352p resolution are limited to just 15 seconds. This constraint can feel puzzling—especially since lower resolutions typically require fewer resources. So why does this limit exist, and more importantly, how can it be fixed or worked around?

TLDR: Sora limits 352p videos to 15 seconds primarily due to computational balancing, model optimization, and platform resource management. Even though 352p is low resolution, video generation depends more on frame consistency and temporal modeling than pixel count alone. The limit helps maintain system stability and fair usage across users. Working around it means upgrading your plan tier, optimizing prompts, generating in segments, or using alternative workflows.

Let’s break down what’s really happening behind the scenes—and what creators can do about it.

Understanding the 352p Limitation

At first glance, it seems counterintuitive. Lower resolution should mean smaller file size and faster rendering, right? While that’s partially true, AI video generation is far more complex than traditional video compression.

Here’s what matters:

- Temporal consistency (how frames relate to each other over time)

- Motion modeling across sequences

- Memory bandwidth during processing

- Model stability over longer clips

Even at 352p, generating 24–30 frames per second over longer durations requires sustained GPU memory and compute power. The limitation isn’t just about pixel count—it’s about maintaining coherent scene evolution across potentially hundreds of frames.

When Sora enforces a 15-second cap at 352p, it’s essentially:

- Preventing system overload during peak usage

- Maintaining consistent output quality

- Balancing server resources among users

- Reducing long-horizon model drift

The lower resolution does reduce per-frame complexity, but it doesn’t reduce the complexity of time-based prediction. That’s the key distinction.

The Real Technical Reason: Temporal Load vs Pixel Load

Most people think resolution is the primary driver of resource usage. In traditional rendering, that’s mostly accurate. However, in generative AI video systems like Sora, what taxes the model most heavily is:

How many sequential frames it must intelligently predict while maintaining narrative and visual coherence.

Consider this simplified breakdown:

| Factor | Impact on System Load |
|---|---|
| Resolution (352p) | Moderate GPU memory use per frame |
| Frame rate (30 FPS) | Multiplies frame processing load |
| Duration (15+ seconds) | Sharply increases temporal complexity (more than linearly if frames attend to one another) |
| Movement complexity | Increases attention and prediction demand |

For example, a 15-second clip at 30 FPS equals 450 frames. Extending that to 60 seconds increases the total to 1,800 frames. Each frame depends on previous frames.

The model must “remember” and maintain spatial continuity across all of them—this is computationally expensive.
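
The frame arithmetic above can be checked with a few lines of Python. This is only a rough sketch: the `pairwise_frame_links` function assumes every frame is compared with every other frame (full temporal attention), which is an illustrative assumption and not a description of how Sora actually works internally.

```python
def frame_count(duration_s: float, fps: int) -> int:
    """Total frames in a clip of the given duration and frame rate."""
    return round(duration_s * fps)


def pairwise_frame_links(frames: int) -> int:
    """Ordered frame pairs if every frame attends to every frame.

    Assumption: full temporal attention. The real architecture is not
    described in this article and may be considerably cheaper.
    """
    return frames * frames


for seconds in (15, 60):
    n = frame_count(seconds, fps=30)
    print(f"{seconds}s -> {n} frames, {pairwise_frame_links(n):,} frame pairs")
```

Under that assumption, a clip four times longer has four times the frames but sixteen times the frame-to-frame relationships, which is one way to see why duration can cost more than resolution.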

Why 352p Is Still Limited Despite Being “Low Quality”

You might assume that lowering resolution should allow more duration. To some degree, it does—but only up to the point where temporal modeling becomes the bottleneck.

Important factors include:

- Model architecture constraints — The AI may have a fixed context window for frames.

- VRAM ceilings — Servers have memory caps to serve multiple users simultaneously.

- Cost control mechanisms — AI video generation is expensive to operate.

- User-tier restrictions — Some plans intentionally limit output length.

It’s not only about what’s technically possible—it’s also about what’s sustainable at scale.

Platforms like Sora must ensure:

- Fair distribution of compute resources

- Stable generation speeds

- Predictable system performance

- Cost-efficient infrastructure usage

Allowing unlimited low-resolution generation could still clog servers if thousands of users generated long clips simultaneously.

Is This a Quality Protection Mechanism?

Yes—and no.

Longer clips introduce a risk known as model drift. This happens when:

- Characters subtly change appearance

- Backgrounds morph unintentionally

- Objects disappear or transform

- Lighting shifts inconsistently

By limiting 352p output to 15 seconds, Sora reduces the likelihood of noticeable inconsistencies. Shorter durations make it easier for the model to maintain coherence.

In other words, the restriction isn’t just infrastructural—it’s also about preserving perceived quality.

How to Fix or Work Around the 15-Second Limit

Now to the important part: What can you actually do?

1. Upgrade Your Plan (If Applicable)

Many AI platforms restrict video duration by subscription tier. Higher plans may allow:

- Longer maximum duration

- Higher resolution unlocks

- Priority processing

- Access to extended context models

This is the most straightforward solution if budget permits.

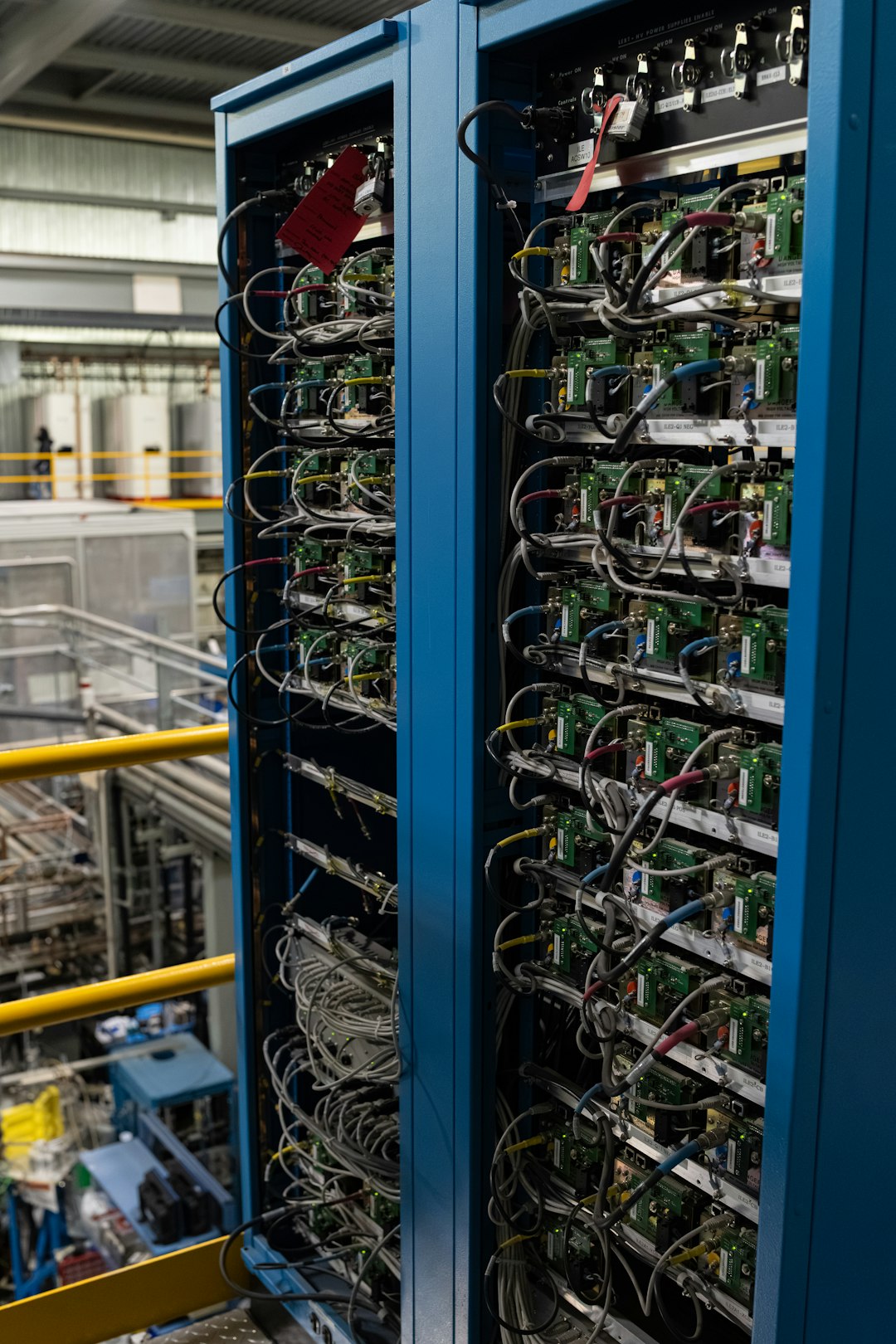

2. Generate in Segments and Stitch Clips Together

This is one of the most effective creative workarounds.

Instead of generating one 60-second video:

- Create four 15-second clips

- Keep prompts consistent across clips

- Use scene-anchoring phrases

- Stitch the clips together in post-production

This approach gives you control and often results in better continuity if done carefully.
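
For the stitching step, one option is ffmpeg's concat demuxer, which joins clips listed in a text file. The sketch below only builds the list file text and the command arguments; the file names (`segment_1.mp4`, `clips.txt`, `stitched.mp4`) are hypothetical, and it assumes all clips share the same codec, resolution, and frame rate so that stream copy works without re-encoding.

```python
def concat_list_text(clips: list[str]) -> str:
    """Build the text of an ffmpeg concat-demuxer list file.

    Assumes paths contain no single quotes; those would need escaping.
    """
    return "".join(f"file '{clip}'\n" for clip in clips)


def concat_command(list_file: str, output: str) -> list[str]:
    """ffmpeg arguments that join the listed clips without re-encoding."""
    return ["ffmpeg", "-f", "concat", "-safe", "0",
            "-i", list_file, "-c", "copy", output]


clips = [f"segment_{i}.mp4" for i in range(1, 5)]  # hypothetical file names
print(concat_list_text(clips), end="")
print(" ".join(concat_command("clips.txt", "stitched.mp4")))
```

Save the printed list as `clips.txt`, then run the printed command. If the clips differ in encoding settings, re-encoding in an editor such as DaVinci Resolve is likely the safer route.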

Pro tip:

- End each clip with a natural transition moment.

- Begin the next clip by referencing the previous action in the prompt.
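
The tips above can be turned into a small prompt-planning helper. This is a minimal sketch: the 15-second cap comes from this article, while the anchor text, the action descriptions, and the prompt wording are made-up examples that you would adapt to your own scene.

```python
import math


def plan_segments(target_s: int, cap_s: int = 15) -> int:
    """Number of clips needed to cover target_s seconds under a per-clip cap."""
    return math.ceil(target_s / cap_s)


def build_prompts(anchor: str, actions: list[str]) -> list[str]:
    """One prompt per segment: repeat the anchor, recall the previous action."""
    prompts = []
    for i, action in enumerate(actions):
        if i == 0:
            prompts.append(f"{anchor} Scene: {action}.")
        else:
            previous = actions[i - 1]
            prompts.append(f"{anchor} Picking up right after {previous}: {action}.")
    return prompts


# Hypothetical scene anchor and actions, one action per 15-second clip.
anchor = "Golden-hour mountain trail, steady camera, same hiker in a red jacket."
actions = [
    "the hiker climbs toward the ridge",
    "the hiker reaches the ridge and pauses",
    "the hiker looks out over the valley",
    "the hiker starts down the far side",
]

assert len(actions) == plan_segments(60)
for prompt in build_prompts(anchor, actions):
    print(prompt)
```

Repeating the same anchor sentence in every prompt is what keeps the setting, lighting, and character description stable from one clip to the next.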

3. Optimize Prompt Design for Stability

Longer generation attempts often fail because the prompt introduces too much complexity.

To improve success rate:

- Limit rapid scene changes

- Avoid introducing new objects mid-sequence

- Keep camera motion simple

- Describe a consistent lighting environment

Simpler prompts give the model fewer changes to track over time, which tends to make clips more stable and easier to chain into longer sequences.

4. Increase Resolution Strategically

This may sound counterintuitive, but depending on the system’s architecture, certain resolution tiers may be optimized for different duration caps.

Sometimes:

- Higher tiers may allow longer duration options

- Premium rendering pipelines may allocate more compute

It’s worth testing different resolution settings to see if duration limits vary.

5. Use External Upscaling After Generation

Instead of generating longer low-resolution clips, consider:

- Generating at the allowed maximum duration

- Stitching segments

- Upscaling final result with AI upscalers

This leverages optimized systems like:

| Tool | Best For | Strength |
|---|---|---|
| Topaz Video AI | Upscaling generated clips | Sharper detail reconstruction |
| Adobe Enhance | Integrated workflow | Color and noise control |
| DaVinci Resolve | Color grading and stitching | Professional post-processing |

This doesn’t remove Sora’s 15-second limitation—but it helps produce professional extended videos.
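
If you want a quick baseline before reaching for an AI upscaler, a conventional resize with ffmpeg is easy to try. Note that this is a plain Lanczos resize, not AI upscaling, so it will not reconstruct detail the way the tools above aim to. The file names and the 1080-pixel target height are assumptions for illustration.

```python
def upscale_command(src: str, dst: str, target_height: int = 1080) -> list[str]:
    """ffmpeg arguments for a plain Lanczos resize to target_height."""
    # scale=-2:H keeps the aspect ratio and rounds the width to an even number.
    video_filter = f"scale=-2:{target_height}:flags=lanczos"
    return ["ffmpeg", "-i", src, "-vf", video_filter, dst]


# Hypothetical input and output file names.
print(" ".join(upscale_command("stitched.mp4", "stitched_1080p.mp4")))
```

Comparing this baseline against an AI upscaler's output on the same clip is a reasonable way to judge whether the dedicated tool is worth the extra processing time.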

Will the 15-Second Limit Be Removed in the Future?

Very likely—at least partially.

AI video models are improving rapidly in:

- Context window expansion

- Memory efficiency

- Compute scaling techniques

- Distributed GPU processing

As infrastructure improves and models become more efficient, we can expect:

- Longer default generation windows

- Dynamic duration scaling

- Better temporal consistency

- Tier-based duration customization

However, there will always be trade-offs between cost, performance, and user access.

The Bigger Picture: AI Video Is Still Evolving

The 352p 15-second limit isn’t a flaw—it’s a reflection of where AI video technology currently stands.

AI-generated video requires:

- Massive GPU clusters

- High energy consumption

- Careful system orchestration

- Intensive model fine-tuning

Every second of generated footage requires an enormous number of coordinated neural network operations.

The limitation is less about restricting creativity and more about managing an emerging technology responsibly.

Final Thoughts

If you’re frustrated by Sora’s 352p 15-second limit, you’re not alone. But understanding the reasoning behind it changes perspective. The restriction exists primarily due to temporal modeling demands, system-wide resource allocation, cost control, and quality preservation.

Fortunately, creators aren’t powerless. You can work around the limitation and produce longer, polished videos by:

- Segmenting clips strategically

- Optimizing prompts

- Using post-production tools

- Exploring subscription tiers

AI video generation is progressing fast. Today’s 15-second cap may become tomorrow’s 2-minute default. Until then, working with the system’s constraints—rather than against them—is often the smartest creative decision.