Access to accurate, timely information can decide whether a business leads its market or lags behind. From tracking competitor prices to gathering research datasets, businesses and developers increasingly rely on automated tools to collect data from across the web. That is where web scraping software such as Scrapy comes in: tools designed to extract, structure, and deliver web data efficiently and at scale.

TL;DR: Web scraping software like Scrapy lets businesses and developers automatically extract structured data from websites. These tools save time, reduce manual work, and support data-driven decisions in fields such as e-commerce, marketing, finance, and research. Popular alternatives to Scrapy include Beautiful Soup, Octoparse, ParseHub, and Apify, each offering a different balance of flexibility and ease of use. Choosing the right tool depends on your technical skill level, data needs, and scalability requirements.

What Is Web Scraping Software?

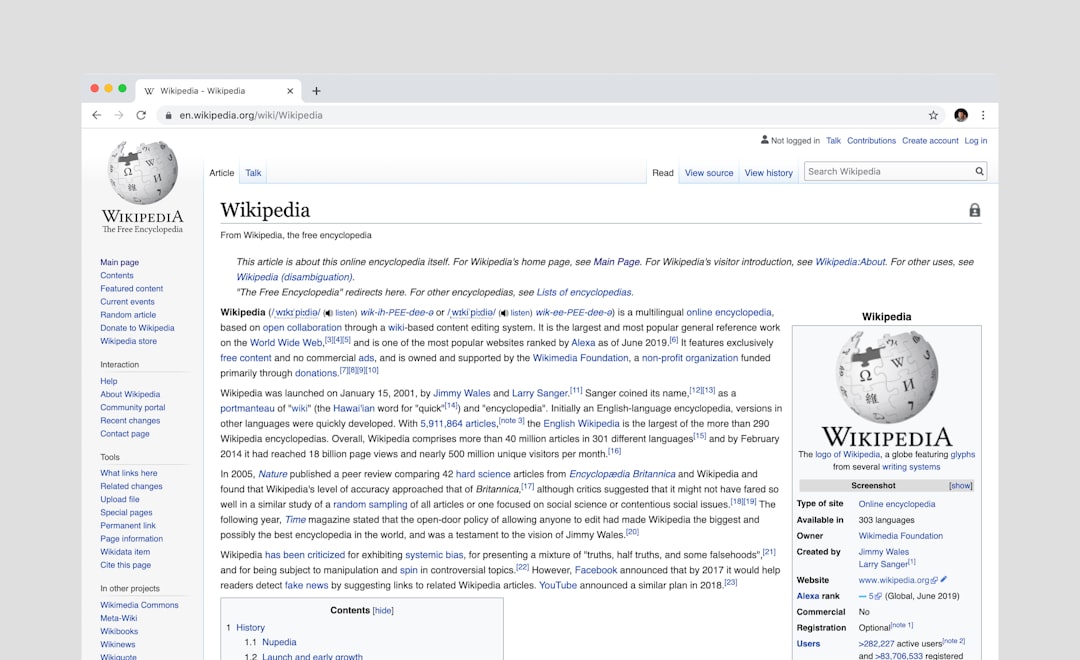

Web scraping software is a tool or framework that automatically extracts information from websites. Instead of manually copying and pasting data, scraping tools send requests to web pages, parse the HTML content, and collect specific data points such as:

- Product prices

- Customer reviews

- Stock market data

- News headlines

- Job listings

- Contact information

These tools transform unstructured webpage content into structured formats like CSV, JSON, or databases, making the data easy to analyze and use.

Why Scrapy Is One of the Most Popular Web Scraping Frameworks

Scrapy is an open-source Python framework specifically designed for large-scale web scraping. It’s fast, powerful, and highly customizable, making it a favorite among developers and data professionals.

Key Features of Scrapy:

- Asynchronous request handling (Scrapy is built on the Twisted networking library), so many requests can be in flight at once

- Item pipelines for cleaning, validating, and storing extracted data

- Support for XPath and CSS selectors for precise targeting

- Extensible middleware system for customization

- Robust documentation and community support

Unlike a one-off scraping script, Scrapy is a complete framework: it manages request scheduling, throttling, data export, and error handling, all of which matter for production-grade scraping.
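As a rough illustration of where scheduling, throttling, export, and error handling are configured, a Scrapy project's `settings.py` might look something like the sketch below. The setting names are standard Scrapy settings; the values and the output file name `items.json` are assumptions chosen for illustration, not recommendations.

```python
# settings.py (sketch): all values are illustrative assumptions, tune them per site

# Scheduling: cap how many requests are in flight at once
CONCURRENT_REQUESTS = 8
CONCURRENT_REQUESTS_PER_DOMAIN = 4

# Throttling: a fixed base delay plus the adaptive AutoThrottle extension
DOWNLOAD_DELAY = 1.0  # seconds between requests to the same site
AUTOTHROTTLE_ENABLED = True
AUTOTHROTTLE_START_DELAY = 1.0
AUTOTHROTTLE_MAX_DELAY = 30.0

# Error handling: retry requests that fail with these HTTP status codes
RETRY_ENABLED = True
RETRY_TIMES = 2
RETRY_HTTP_CODES = [429, 500, 502, 503, 504]

# Exporting: write scraped items to a JSON feed (hypothetical file name)
FEEDS = {
    "items.json": {"format": "json", "encoding": "utf8"},
}

# Respect each site's robots.txt rules
ROBOTSTXT_OBEY = True
```

Because these are plain module-level assignments, they can be adjusted without touching spider code, which is much of the appeal of a framework over a standalone script.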

Use Cases: Where Web Scraping Delivers Real Value

Web scraping tools like Scrapy power a wide range of practical business and research applications.

1. E-commerce Price Monitoring

Retailers use scraping tools to track competitor prices and adjust their own pricing strategies accordingly.

2. Market Research

Companies gather public opinion, sales trends, and product feedback across multiple sites.

3. Lead Generation

Businesses extract publicly listed contact information to build prospect databases.

4. Financial Data Collection

Investment firms scrape financial statements, stock prices, and economic indicators.

5. Academic Research

Researchers compile large datasets from online publications, forums, and archives.

Other Web Scraping Tools Similar to Scrapy

While Scrapy is powerful, it’s not the only option available. Depending on your technical expertise and project scale, other scraping tools may be better suited to your needs.

1. Beautiful Soup (Python)

Beautiful Soup is a lightweight Python library for parsing HTML and XML documents. Unlike Scrapy, it is not a full framework: it parses documents you have already downloaded and leaves fetching, scheduling, and storage to other code.

- Easy to learn

- Great for small projects

- Works well with the Requests library

- Limited scalability compared to Scrapy

2. Octoparse

Octoparse is a no-code scraping tool with a visual interface, making it ideal for non-programmers.

- Point-and-click interface

- Cloud extraction features

- Built-in templates for popular sites

- Subscription pricing model

3. ParseHub

ParseHub is another visual scraping tool that can handle dynamic websites and JavaScript-rendered content.

- Visual project builder

- Handles complex websites

- Desktop and cloud options

- Beginner-friendly

4. Apify

Apify is a cloud-based scraping and automation platform that includes ready-made scrapers and supports custom code.

- Cloud infrastructure included

- API integration

- Automation capabilities

- Scalable for enterprise use

Comparison Chart of Popular Web Scraping Tools

| Tool | Best For | Technical Level | Scalability | Pricing |
|---|---|---|---|---|
| Scrapy | Large-scale custom scraping | Advanced | High | Free (Open-source) |
| Beautiful Soup | Small Python projects | Beginner-Intermediate | Moderate | Free (Open-source) |
| Octoparse | No-code business users | Beginner | Moderate-High | Paid plans |
| ParseHub | Visual scraping of dynamic sites | Beginner | Moderate | Free & Paid tiers |
| Apify | Cloud-based automation | Intermediate-Advanced | High | Paid plans |

How Scraping Software Works Behind the Scenes

At its core, web scraping software follows a structured process:

- Send an HTTP request to a target URL.

- Download the webpage content (HTML, JSON, etc.).

- Parse the content using selectors or rules.

- Extract specific data elements.

- Store or export the data in a structured format.
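The steps above can be sketched with nothing but the Python standard library. This is a minimal illustration, not how Scrapy or any particular tool implements them: the markup (`<li class="product">` with `name` and `price` spans), the sample data, the user-agent string, and all function names are hypothetical.

```python
import csv
import io
import json
from html.parser import HTMLParser
from urllib.request import Request, urlopen


def fetch(url, timeout=10.0):
    """Steps 1-2: send an HTTP GET request and download the body as text."""
    request = Request(url, headers={"User-Agent": "example-scraper/0.1"})
    with urlopen(request, timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


class ProductParser(HTMLParser):
    """Steps 3-4: walk the HTML and collect name/price pairs.

    Assumes hypothetical markup: <li class="product"> elements that
    contain <span class="name"> and <span class="price">.
    """

    def __init__(self):
        super().__init__()
        self.products = []  # one dict per product found
        self._field = None  # field currently being read, if any

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if tag == "li" and "product" in classes:
            self.products.append({"name": "", "price": ""})
        elif tag == "span" and self.products:
            for field in ("name", "price"):
                if field in classes:
                    self._field = field

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None

    def handle_data(self, data):
        if self._field and self.products:
            self.products[-1][self._field] += data.strip()


def extract_products(html):
    """Run the parser over an HTML string and return the extracted rows."""
    parser = ProductParser()
    parser.feed(html)
    parser.close()
    return parser.products


def to_csv(rows):
    """Step 5: serialize the rows as CSV text."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["name", "price"], lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Hypothetical page content, used so the sketch runs without network access
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Widget</span> <span class="price">9.99</span></li>
  <li class="product"><span class="name">Gadget</span> <span class="price">24.50</span></li>
</ul>
"""

if __name__ == "__main__":
    rows = extract_products(SAMPLE_HTML)  # swap in fetch(url) for a live page
    print(to_csv(rows))
    print(json.dumps(rows, indent=2))
```

In practice a selector library (CSS or XPath) replaces the hand-written parser class, but the division into fetch, parse, extract, and export stays the same.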

More advanced tools also handle:

- Session management

- Login authentication

- Proxy rotation

- CAPTCHA handling

- JavaScript rendering

Benefits of Using Web Scraping Software

1. Automation at Scale

Instead of manually gathering data, you can automate extraction across hundreds or thousands of pages.

2. Time Efficiency

Tasks that would take weeks manually can be completed in hours.

3. Competitive Insights

Businesses gain an up-to-date view of competitor and market activity.

4. Customizable Data Pipelines

Frameworks like Scrapy allow deep customization, enabling users to build tailored workflows.

5. Cost Savings

Automated data collection reduces labor costs and improves operational efficiency.

Challenges and Considerations

While web scraping is powerful, it comes with challenges:

- Website structure changes can break crawlers.

- Anti-bot protections may block automated access.

- Legal and ethical constraints, such as terms of service, copyright, and privacy rules, must be respected.

- Dynamic JavaScript content requires advanced handling.

Before scraping a website, always review its terms of service, its robots.txt file, and applicable regulations. Responsible scraping also means respecting rate limits and avoiding unnecessary load on the server.
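One common way to put this into practice is to check a site's `robots.txt` rules and pause between requests. The sketch below uses Python's standard `urllib.robotparser` module; the bot name, the `robots.txt` content, the URLs, and the helper names `build_parser` and `polite_urls` are all hypothetical, and following `robots.txt` does not replace reading the terms of service.

```python
import time
from urllib.robotparser import RobotFileParser

USER_AGENT = "example-scraper"  # hypothetical bot name

# Hypothetical robots.txt content. Against a real site you would instead call
# parser.set_url("https://example.com/robots.txt") followed by parser.read().
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""


def build_parser(robots_txt):
    """Parse robots.txt text into a RobotFileParser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def polite_urls(parser, urls, default_delay=1.0, sleep=time.sleep):
    """Yield only the URLs robots.txt allows, pausing between them.

    Uses the site's Crawl-delay if it declares one, else default_delay.
    """
    delay = parser.crawl_delay(USER_AGENT) or default_delay
    first = True
    for url in urls:
        if not parser.can_fetch(USER_AGENT, url):
            continue  # disallowed path: skip it entirely
        if not first:
            sleep(delay)  # rate limit: wait before each subsequent request
        first = False
        yield url


if __name__ == "__main__":
    candidates = [
        "https://example.com/products",
        "https://example.com/private/data",
        "https://example.com/about",
    ]
    for url in polite_urls(build_parser(ROBOTS_TXT), candidates):
        print("would fetch:", url)
```

The `sleep` parameter is injectable only so the pacing logic can be exercised without real waiting; a production crawler would typically rely on its framework's throttling instead.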

Choosing the Right Tool for Your Needs

Your ideal scraping software depends on several factors:

Technical Skill Level

- If you know Python and need flexibility → Scrapy or Beautiful Soup.

- If you prefer visual tools → Octoparse or ParseHub.

Project Scale

- Small one-off tasks → Lightweight libraries.

- Enterprise-grade scraping → Scrapy or Apify.

Budget Constraints

- Open-source tools offer cost advantages.

- Cloud platforms reduce infrastructure setup costs.

The Future of Web Scraping

As websites grow more complex with heavy JavaScript frameworks and interactive elements, scraping technology continues to evolve. Headless browsers, AI-assisted data extraction, and intelligent automation systems are becoming increasingly common.

Machine learning is also improving scraping accuracy—automatically identifying patterns and adapting to layout changes. Meanwhile, cloud-based scraping platforms are making it easier than ever to deploy scalable data extraction pipelines.

For businesses aiming to stay competitive, web scraping is no longer a niche technical trick—it’s a strategic advantage.

Final Thoughts

Web scraping software like Scrapy empowers businesses, developers, and researchers to transform the web into a structured, queryable data source. Whether you’re building a research dataset, monitoring market trends, or powering analytics dashboards, scraping tools can dramatically streamline your workflow.

Scrapy stands out for its flexibility and scalability, but alternative tools cater to different experience levels and project needs. By selecting the right solution and implementing scraping ethically and responsibly, you unlock a powerful capability that fuels smarter decisions and innovative solutions.

In a world overflowing with data, the ability to extract meaningful insights efficiently is not just helpful—it’s essential.